The key to good ETL design is performance and accuracy. Google Cloud Dataflow: Enables you to create serverless ETL processes to store data on the Google Cloud Platform (GCP).AWS Glue: Enables you to design ETL from unstructured and structured data to store on S3 buckets.Informatica PowerCenter: Gives end users the tools to import data and design their own data pipelines for business projects.Talend: Offers an open source GUI for drag-and-drop data pipeline integration.Structured data requires more formatting than unstructured data, so any out-of-the-box tools must integrate with your chosen database platform. The way you store data depends on if you need unstructured or structured data. Database engines often come with their own ETL features so that businesses can import data. ETL Tools and Technologiesįor large data pipelines, most organisations use custom tools and scripts for ETL. Businesses might not see changes to data for several hours, but current ETL technology provides updates to data so that analytics can reflect recent changes to trends. Traditionally, data imports were batched and ETL was slow. Businesses could pull data from numerous locations and automatically prepare it for internal analytics, which also powers future business decisions and product launches.ĮTL speeds up data updates, so it benefits businesses that need to work with current or real-time data. It’s highly likely that all sources will create duplicate records, but an ETL process will take the data, remove duplicates, and format the data for internal analytic applications. For example, a business working with traffic analysis could pull data from several different government sources. Raw data without any transformation is generally useless for analytics, especially if your business uses similar data from several sources. A well-designed ETL process does not need many changes and can import data from various sources without manual interaction. 
Some ETL processes could be a weekly or monthly occurrence, and most database engines offer a scheduler that runs on the server to run tasks at a set time. Once an ETL process is designed, it runs automatically throughout the day. ETL often runs several times a day for larger corporations, so only new data is added without affecting current application data already stored in the database. Data must be preserved and duplicates avoided, so the load step must take into account incremental changes every time the ETL process executes.

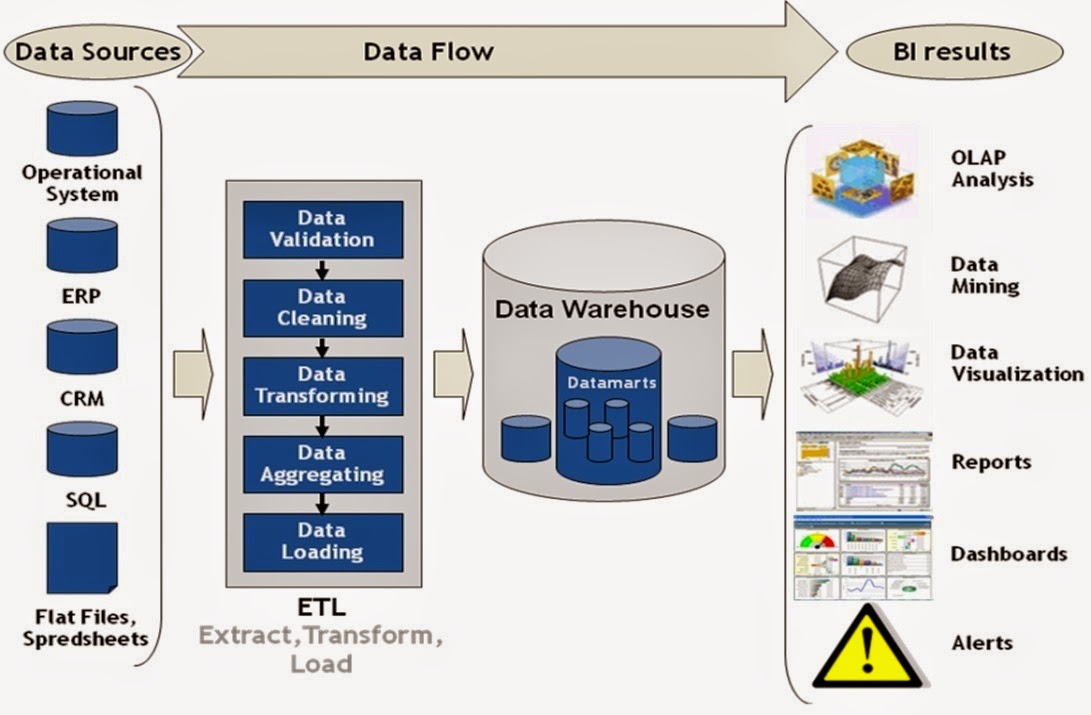

Load: After transformation, data is sent to the data warehouse for storage.For example, a phone number might be stored with or without hyphens, so the transformation process either adds or removes hyphens prior to being sent to storage. Data is formatted so that it can be stored. Duplicate data can skew analytic results, so duplicate line items are removed prior to storing them. Data must be formatted, filtered, and validated before it can be sent to the data warehouse. Transform: Business rules and the destination storage location define transformation design.Because sources could have various formats, the first step in ETL pulls data from a source for the next step. Sources could be from an API, a website, another database, IoT logs, files, email, or any other ingestible data format. Extract: Raw data for extraction could come from one or several sources.Design of an ETL process addresses the following three steps: Database administrators, developers, and cloud architects usually design the ETL process using business rules and application requirements. The ETL ProcessĮTL has three steps: extract, transform, and load. The log data for ETL events could also go through its own data pipeline before being stored in a data warehouse for administrative analytics. Administrators responsible for the databases storing ETL data manage logging, auditing, and backups. Data can be structured or unstructured, or it could be both.Īfter the ETL process happens, the data is stored in a data warehouse where administrators can further manage it. The ETL process is a group of business rules that determine the data sources used to pull data, transform it into a specific format, and then load it into a database. What Is ETL?ĭata pulled from various sources must be stored in a specific form to allow applications, machine learning, artificial intelligence, and analytics to work with it. 
The process logic and infrastructure design will depend on the business requirements, data being stored, and whether the format is structured or unstructured. Extract, transform, and load (ETL) is an important process in data warehousing when businesses need to pull data from multiple sources and store it in a centralized location.
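
To make the transform and load steps described in this article more concrete, here is a minimal Python sketch. It is an illustration under assumptions, not a production pipeline: the record fields (`name`, `phone`), the `contacts` table, and the function names are all hypothetical, and an in-memory SQLite database stands in for a real data warehouse. The transform step strips hyphens from phone numbers, skips invalid records, and removes duplicates. The load step is incremental, so rerunning it adds only rows that are not already stored.

```python
import re
import sqlite3


def normalize_phone(raw):
    """Keep digits only, so '555-123-4567' and '5551234567' compare equal."""
    return re.sub(r"\D", "", raw)


def transform(records):
    """Format, validate, and de-duplicate raw records (hypothetical schema)."""
    seen = set()
    cleaned = []
    for rec in records:
        name = rec.get("name", "").strip()
        phone = normalize_phone(rec.get("phone", ""))
        if not name or not phone:
            continue  # validation: skip records missing a required field
        if phone in seen:
            continue  # duplicate within this batch
        seen.add(phone)
        cleaned.append({"name": name, "phone": phone})
    return cleaned


def load(conn, rows):
    """Incremental load: insert new rows only and return how many were added."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS contacts "
        "(phone TEXT PRIMARY KEY, name TEXT NOT NULL)"
    )
    before = conn.total_changes
    # INSERT OR IGNORE leaves rows that are already stored untouched.
    conn.executemany(
        "INSERT OR IGNORE INTO contacts (phone, name) VALUES (:phone, :name)",
        rows,
    )
    conn.commit()
    return conn.total_changes - before


if __name__ == "__main__":
    # Two hypothetical sources that overlap on one contact.
    source_a = [{"name": "Ada", "phone": "555-123-4567"}]
    source_b = [
        {"name": "Ada", "phone": "5551234567"},
        {"name": "Lin", "phone": "555 987 6543"},
    ]
    conn = sqlite3.connect(":memory:")
    print(load(conn, transform(source_a + source_b)))  # 2 rows added
    print(load(conn, transform(source_a + source_b)))  # 0 rows added on rerun
```

Using the normalized phone number as the primary key is a simplification chosen for this sketch. A real pipeline would pick its deduplication key from the business rules, and a scheduler would trigger the run rather than a script invoked by hand.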
